If you’re searching for a clear explanation of how feed-based systems and publish-subscribe architecture actually work in modern digital infrastructure, you’re in the right place. Many technical guides either stay too theoretical or dive so deep into code that they miss the bigger strategic picture. This article bridges that gap.

We’ll break down the core concepts behind feed-driven networks, explain how publish-subscribe models enable scalable, event-driven communication, and show how these patterns improve workflow efficiency and system resilience. Whether you’re optimizing internal processes, designing distributed systems, or evaluating infrastructure strategies, you’ll gain practical insights you can apply immediately.

Our analysis draws on real-world implementations, current protocol standards, and established best practices in distributed systems design. Instead of surface-level summaries, you’ll get structured explanations, clear examples, and expert-backed guidance to help you understand not just how these systems work—but why they matter for modern digital operations.

Decoupling Your Services: A Practical Guide to Pub/Sub Messaging

When services are tightly connected, one failure can ripple through your stack (and ruin your weekend). A publish-subscribe architecture changes that by letting services communicate through events instead of direct calls. The benefit? Speed, resilience, and freedom to scale.

Here’s what you gain:

- Independent scaling – grow high-traffic services without touching others.

- Faster feature releases – add new subscribers without rewriting core logic.

- Improved fault tolerance – if a subscriber goes down, a durable broker keeps its messages queued until it recovers.

The result is a system that shares data efficiently, delivers real-time notifications, and supports innovation without constant refactoring.

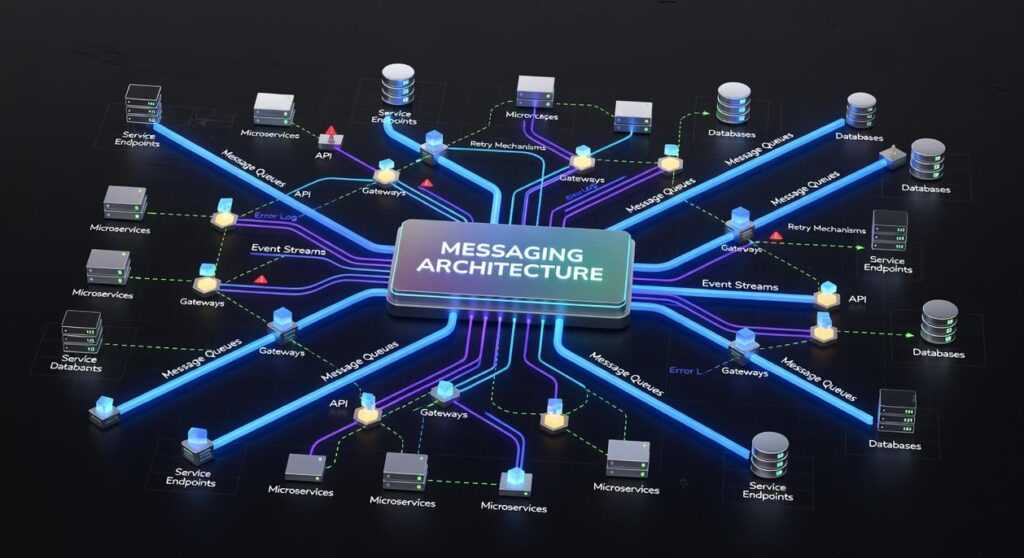

Understanding the Publish-Subscribe Architecture

The publish-subscribe architecture is an asynchronous messaging pattern in which publishers send messages without waiting for replies. They simply emit events to a central broker under a named topic (or channel), and the broker delivers each event to every subscriber registered for that topic. Subscribers declare interest in specific topics and automatically receive relevant updates.

Think of it like a radio broadcast. A station transmits on a frequency, and anyone tuned in can listen, while the station never tracks individual listeners.

In contrast, request-response APIs require a caller to wait for an answer before moving forward. That synchronous model works for direct queries, but it creates tight coupling. With Pub/Sub, producers and consumers stay decoupled, improving scalability.
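To make the contrast with request-response concrete, here is a minimal Python sketch. It assumes an in-process `asyncio.Queue` as a stand-in for a real broker, and the function and field names are hypothetical. The publisher enqueues three events and moves on immediately; a deliberately slow consumer picks them up later, so publishing time does not depend on processing time.

```python
import asyncio
from typing import List, Tuple


async def slow_consumer(queue: asyncio.Queue, processed: List[dict]) -> None:
    """Subscriber side: pulls events whenever it is ready and handles them slowly."""
    while True:
        event = await queue.get()
        await asyncio.sleep(0.05)  # simulate slow downstream work (e.g. sending an email)
        processed.append(event)
        queue.task_done()


async def main() -> Tuple[float, List[dict]]:
    queue: asyncio.Queue = asyncio.Queue()  # stands in for the broker
    processed: List[dict] = []
    worker = asyncio.create_task(slow_consumer(queue, processed))

    loop = asyncio.get_running_loop()
    start = loop.time()
    for order_id in range(3):
        # Publisher side: enqueue and move on; no reply is awaited.
        queue.put_nowait({"event": "order.placed", "orderId": order_id})
    publish_elapsed = loop.time() - start

    await queue.join()  # waiting here is only for the demo, to inspect the results
    worker.cancel()
    try:
        await worker
    except asyncio.CancelledError:
        pass
    return publish_elapsed, processed


elapsed, processed = asyncio.run(main())
print(f"publishing took {elapsed:.4f}s; consumer handled {len(processed)} events")
```

In a synchronous request-response design, the caller would have paid the consumer's 0.05 s per event before continuing; here that cost is absorbed by the consumer alone.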

For example, in an e-commerce app, an order service can publish an “order placed” event. Payment, inventory, and notification services then subscribe and react independently. To implement this, define clear topics, choose a reliable broker, and design idempotent consumers: handlers that can safely process the same message more than once, since many brokers guarantee at-least-once rather than exactly-once delivery.
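A minimal sketch of the order example, assuming a toy in-memory broker (`InMemoryBroker` and `idempotent` are hypothetical names, not a library API). One `order.placed` event fans out to three independent subscribers, and each handler is wrapped so that a redelivered event with the same `eventId` is applied only once.

```python
from collections import defaultdict
from typing import Callable, DefaultDict, List, Set

Handler = Callable[[dict], None]


class InMemoryBroker:
    """Toy broker: delivers each published event to every subscriber of its topic."""

    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: dict) -> None:
        # The publisher never learns who (if anyone) is listening.
        for handler in self._subscribers[topic]:
            handler(event)


def idempotent(handler: Handler) -> Handler:
    """Wrap a handler so a redelivered event (same eventId) is applied only once."""
    seen: Set[str] = set()  # in-memory only; a real service would persist this

    def wrapper(event: dict) -> None:
        if event["eventId"] in seen:
            return  # duplicate delivery: already handled
        seen.add(event["eventId"])
        handler(event)

    return wrapper


log: List[str] = []
broker = InMemoryBroker()
for service in ("payment", "inventory", "notification"):
    # The default argument binds the current service name to each lambda.
    broker.subscribe("order.placed",
                     idempotent(lambda e, s=service: log.append(f"{s} handled {e['eventId']}")))

order_placed = {"eventId": "evt-1", "orderId": 42}
broker.publish("order.placed", order_placed)
broker.publish("order.placed", order_placed)  # simulate an at-least-once redelivery
print(log)
# ['payment handled evt-1', 'inventory handled evt-1', 'notification handled evt-1']
```

Deduplicating on the event ID is only one way to get idempotency; handlers whose effects are naturally idempotent (for example, "set status to shipped") may not need the extra bookkeeping.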

Real-time systems live or die by how quickly they move information. That’s where a publish-subscribe architecture creates a strategic edge. Instead of hardwiring services together, you route events through a broker that distributes messages to anyone listening. As a result, you can grow the system horizontally by adding new subscribers, whether that’s a mobile app, an analytics engine, or a notification service, without changing the publisher.

Consider a live stock tracker. When price data is published, trading dashboards, alerting systems, and archival databases all receive the update at nearly the same moment. If one subscriber fails, the others continue unaffected. Meanwhile, brokers that persist messages can buffer them for the failed subscriber, so a temporary outage doesn’t cause data loss.
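The isolation and buffering described above can be sketched with a toy broker that keeps a separate buffer per subscriber. This is a minimal illustration under stated assumptions: `BufferingBroker`, the `ACME` ticker, and the simulated outage flag are hypothetical, and the buffers live in memory, whereas a durable broker would store them on disk.

```python
from collections import deque
from typing import Callable, Deque, Dict, List

Handler = Callable[[dict], None]


class BufferingBroker:
    """Toy broker: every subscriber has its own buffer, so one failure stays isolated."""

    def __init__(self) -> None:
        self._buffers: Dict[str, Deque[dict]] = {}
        self._handlers: Dict[str, Handler] = {}

    def subscribe(self, name: str, handler: Handler) -> None:
        self._buffers[name] = deque()
        self._handlers[name] = handler

    def publish(self, event: dict) -> None:
        for buffer in self._buffers.values():
            buffer.append(event)  # each subscriber gets its own copy to consume

    def deliver(self) -> None:
        """Drain each buffer; a failing handler only blocks its own buffer."""
        for name, buffer in self._buffers.items():
            while buffer:
                try:
                    self._handlers[name](buffer[0])
                except Exception:
                    break  # keep the message buffered; retry on the next deliver()
                buffer.popleft()  # remove only after the handler succeeded

    def pending(self, name: str) -> int:
        return len(self._buffers[name])


dashboard: List[float] = []
archive: List[float] = []
archive_online = False  # simulated outage of the archival database


def archive_handler(event: dict) -> None:
    if not archive_online:
        raise ConnectionError("archive database unavailable")
    archive.append(event["price"])


broker = BufferingBroker()
broker.subscribe("dashboard", lambda e: dashboard.append(e["price"]))
broker.subscribe("archive", archive_handler)

broker.publish({"symbol": "ACME", "price": 101.5})
broker.deliver()
pending_during_outage = broker.pending("archive")  # 1: buffered, not lost

archive_online = True  # the archive recovers
broker.deliver()
print(dashboard, archive, broker.pending("archive"))  # [101.5] [101.5] 0
```

The dashboard receives the price even while the archive is down, and the archive catches up once it recovers.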

Practical Implementation Steps

First, define clear event schemas—structured message formats that ensure every subscriber interprets data consistently. Next, configure durable queues, which store messages until they’re acknowledged. Then, implement monitoring dashboards to track throughput, latency, and failure rates. Pro tip: start with small, well-defined topics instead of broad ones; this keeps scaling manageable.
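As a rough idea of what such a dashboard would read, here is a minimal Python sketch of per-subscriber counters for throughput, failure rate, and latency. The `SubscriberMetrics` class is hypothetical, and the latency calculation assumes the publish and handle timestamps come from clocks that are in sync, which is not guaranteed across machines.

```python
import statistics
from dataclasses import dataclass, field
from typing import List


@dataclass
class SubscriberMetrics:
    """Counters a monitoring dashboard could scrape for one subscriber (hypothetical shape)."""

    processed: int = 0
    failed: int = 0
    latencies_s: List[float] = field(default_factory=list)

    def record(self, published_at: float, handled_at: float, ok: bool) -> None:
        """Record one delivery; times are in seconds from a shared clock."""
        if ok:
            self.processed += 1
            self.latencies_s.append(handled_at - published_at)  # end-to-end latency
        else:
            self.failed += 1

    def throughput(self, window_s: float) -> float:
        """Successfully processed messages per second over the observation window."""
        return self.processed / window_s

    def failure_rate(self) -> float:
        total = self.processed + self.failed
        return self.failed / total if total else 0.0

    def median_latency_s(self) -> float:
        return statistics.median(self.latencies_s) if self.latencies_s else 0.0


metrics = SubscriberMetrics()
metrics.record(published_at=0.0, handled_at=0.25, ok=True)
metrics.record(published_at=0.0, handled_at=0.75, ok=True)
metrics.record(published_at=0.0, handled_at=1.0, ok=False)
print(metrics.throughput(window_s=1.0), metrics.failure_rate(), metrics.median_latency_s())
```

In production these numbers would typically be exported to an existing monitoring system rather than computed by hand.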

For decentralized social platforms, see “ActivityPub Explained: Decentralized Social Networking Protocols.” Ultimately, this model improves responsiveness, strengthens resilience, and lets teams deploy independently, which is exactly what modern real-time applications demand. In practice, that flexibility translates into faster releases and better user experiences across distributed ecosystems at scale.

Architecting Your System: Publishers, Subscribers, and Topics

The first time I wired up an event-driven system, I thought it would be simple. One service talks to another—done. Two weeks later, I had a tangled mess of direct API calls (and a newfound respect for clean architecture).

That’s when I truly understood publish-subscribe architecture.

Publishers are any services that announce something happened. Think of a user service emitting a user.created event when someone signs up. The publisher doesn’t care who’s listening. It just reports the fact.

Subscribers are services that react. A welcome email service might subscribe to user.created. Meanwhile, a data analytics service could subscribe to the same topic to update dashboards. Same event, different reactions. Decoupled and clean.

Then come Topics (or Channels)—named message categories that organize communication. A clear naming structure like orders.shipped or notifications.user.mention prevents chaos later. (Trust me, vague topic names age poorly.)
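Dot-separated names also pay off because many brokers let subscribers bind to topic patterns instead of exact names; RabbitMQ’s topic exchanges, for instance, use `*` for exactly one word and `#` for zero or more words. The helper below is a hypothetical Python sketch of that style of matching, not any broker’s actual implementation.

```python
from typing import List


def topic_matches(pattern: str, topic: str) -> bool:
    """Match a dot-separated topic against a pattern.

    '*' matches exactly one word; '#' matches zero or more words.
    """
    return _match(pattern.split("."), topic.split("."))


def _match(pattern: List[str], words: List[str]) -> bool:
    if not pattern:
        return not words  # both exhausted: match
    head, rest = pattern[0], pattern[1:]
    if head == "#":
        # '#' either matches nothing more, or swallows one word and tries again.
        return _match(rest, words) or (bool(words) and _match(pattern, words[1:]))
    if not words:
        return False
    return (head == "*" or head == words[0]) and _match(rest, words[1:])


print(topic_matches("orders.*", "orders.shipped"))                     # True
print(topic_matches("orders.*", "orders.shipped.eu"))                  # False
print(topic_matches("notifications.#", "notifications.user.mention"))  # True
```

A subscriber bound to `notifications.#` would then receive `notifications.user.mention` along with any future notification types, which is only predictable if topic names follow a consistent hierarchy.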

Finally, the Message Broker acts as traffic control. Redis Pub/Sub works well for lightweight, fast messaging, though it does not persist messages, so a subscriber that is offline misses them. RabbitMQ shines when you need complex routing. Apache Kafka handles massive, high-throughput event streams (think Netflix-scale pipelines, per Confluent’s documentation).

Some argue direct APIs are simpler. Initially, yes. But as systems grow, event-driven models scale more gracefully—and with fewer 2 a.m. refactors.

Your Implementation Blueprint: From Setup to Scaling

Step 1: Choose Your Broker Technology

Start by asking the uncomfortable questions. “Do we actually need message persistence, or are we overengineering this?” one CTO once said in a planning meeting. Fair point.

Evaluate:

- High throughput (handling massive data streams)

- Low latency (minimal delay between send and receive)

- Durability (messages survive crashes)

Kafka excels at high-throughput event streaming. RabbitMQ is strong for flexible routing. Cloud-native tools like AWS SNS/SQS or Google Cloud Pub/Sub reduce infrastructure overhead. Some engineers argue, “Just pick the managed service and move on.” That works—until compliance, cost, or customization requirements say otherwise.

Step 2: Define Your Message Structure

Standardize with JSON and a consistent schema. For example:

{ "eventId": "abc123", "timestamp": "2026-02-25T10:00:00Z", "data": { "userId": 123 } }

An event ID (unique identifier), timestamp (when it occurred), and payload (actual data) are essential. Think of it as labeling every package before shipping (Amazon learned this the hard way in its early scaling days).
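One way to enforce that envelope in code is a small typed wrapper. This is a minimal Python sketch, assuming UUIDs for event IDs and second-precision UTC timestamps in the same format as the JSON example; the `Event` class is hypothetical, and its field names are kept in camelCase to mirror the JSON.

```python
import json
import uuid
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Any, Dict

REQUIRED_FIELDS = {"eventId", "timestamp", "data"}


@dataclass(frozen=True)
class Event:
    eventId: str
    timestamp: str
    data: Dict[str, Any]

    @staticmethod
    def create(data: Dict[str, Any]) -> "Event":
        # Unique ID plus an ISO-8601 UTC timestamp such as 2026-02-25T10:00:00Z.
        ts = datetime.now(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")
        return Event(eventId=str(uuid.uuid4()), timestamp=ts, data=data)

    def to_json(self) -> str:
        return json.dumps(asdict(self))

    @staticmethod
    def from_json(raw: str) -> "Event":
        obj = json.loads(raw)
        if not isinstance(obj, dict):
            raise ValueError("event must be a JSON object")
        missing = REQUIRED_FIELDS - set(obj)
        if missing:
            raise ValueError(f"missing fields: {sorted(missing)}")  # reject malformed events early
        return Event(obj["eventId"], obj["timestamp"], obj["data"])


event = Event.create({"userId": 123})
print(event.to_json())
```

Rejecting malformed messages at parse time keeps every subscriber from having to guess at missing fields.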

Step 3: Implement Publisher Logic

Pseudo-code:

connect(broker)

message = createMessage(schema)

publish(topic, message)
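The pseudo-code above can be fleshed out as a minimal Python sketch. `RecordingBroker` is a hypothetical stand-in for a real broker client (it just records what was sent), and the lowercase, dot-separated topic rule is an assumption. "Predictable" here means the publisher validates the topic, always builds the same envelope shape, and serializes with sorted keys.

```python
import json
import re
import uuid
from datetime import datetime, timezone
from typing import Any, Dict, List, Tuple

TOPIC_PATTERN = re.compile(r"[a-z0-9_]+(\.[a-z0-9_]+)*")  # e.g. user.created


class RecordingBroker:
    """Hypothetical stand-in for a broker connection; records (topic, payload) pairs."""

    def __init__(self) -> None:
        self.sent: List[Tuple[str, str]] = []

    def publish(self, topic: str, payload: str) -> None:
        self.sent.append((topic, payload))


def create_message(data: Dict[str, Any]) -> Dict[str, Any]:
    return {
        "eventId": str(uuid.uuid4()),
        "timestamp": datetime.now(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ"),
        "data": data,
    }


def publish_event(broker: RecordingBroker, topic: str, data: Dict[str, Any]) -> str:
    if not TOPIC_PATTERN.fullmatch(topic):
        raise ValueError(f"invalid topic name: {topic!r}")
    message = create_message(data)
    # sort_keys gives a stable field order for every message of the same shape
    broker.publish(topic, json.dumps(message, sort_keys=True))
    return message["eventId"]  # callers can log or trace this ID


broker = RecordingBroker()  # in real code: the broker client's connect step
event_id = publish_event(broker, "user.created", {"userId": 123})
```

Returning the event ID lets the publisher log it, so a single event can be traced through every subscriber that handles it.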

As one developer put it, “If your publisher isn’t predictable, your whole publish subscribe architecture collapses.”

Step 4: Implement Subscriber Logic

connect(broker)

subscribe(topic)

onMessage(message):

    process(message.data)

    acknowledge()
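A runnable version of the subscriber loop needs a broker that understands acknowledgments. Below is a minimal Python sketch with a hypothetical in-memory `AckQueue` (not a real client API): a delivered message stays "in flight" until it is acknowledged, and a negative acknowledgment (`nack`) puts it back for redelivery. The key design choice is that `ack` happens only after processing succeeds.

```python
from collections import deque
from typing import Callable, Deque, Dict, List, Optional


class AckQueue:
    """Toy queue with explicit acknowledgments (hypothetical, not a real client API)."""

    def __init__(self) -> None:
        self._ready: Deque[dict] = deque()
        self._in_flight: Dict[str, dict] = {}

    def put(self, message: dict) -> None:
        self._ready.append(message)

    def get(self) -> Optional[dict]:
        if not self._ready:
            return None
        message = self._ready.popleft()
        self._in_flight[message["eventId"]] = message  # delivered, not yet acknowledged
        return message

    def ack(self, event_id: str) -> None:
        self._in_flight.pop(event_id)  # processed: safe to forget

    def nack(self, event_id: str) -> None:
        self._ready.append(self._in_flight.pop(event_id))  # not processed: redeliver later


def consume(queue: AckQueue, process: Callable[[dict], None]) -> None:
    while (message := queue.get()) is not None:
        try:
            process(message["data"])
        except Exception:
            queue.nack(message["eventId"])
            break  # stop for now; the message will be redelivered
        queue.ack(message["eventId"])  # acknowledge only after successful processing


handled: List[dict] = []
attempts = {"count": 0}


def process(data: dict) -> None:
    attempts["count"] += 1
    if attempts["count"] == 1:
        raise RuntimeError("transient failure")  # the first attempt fails
    handled.append(data)


queue = AckQueue()
queue.put({"eventId": "abc123", "data": {"userId": 123}})
consume(queue, process)  # first attempt fails; the message is requeued
consume(queue, process)  # redelivered, processed, and acknowledged
```

Acknowledging before processing would be simpler, but a crash mid-processing would then lose the message silently.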

Step 5: Plan for Failure

Use acknowledgments (ACKs) to confirm processing. Without them, you’re guessing whether a message was actually handled. Add a Dead-Letter Queue (DLQ) to hold messages that keep failing after a set number of retries. Pro tip: monitor DLQ spikes; they’re early warning signals (like a check engine light for your system).
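A minimal Python sketch of the retry-then-DLQ policy, assuming a hypothetical retry budget of three attempts and a plain list standing in for the dead-letter queue. Real brokers usually provide their own DLQ configuration, and production code would add a backoff delay between attempts.

```python
from typing import Callable, List

MAX_ATTEMPTS = 3  # hypothetical retry budget


def handle_with_dlq(message: dict, process: Callable[[dict], None],
                    dead_letters: List[dict], max_attempts: int = MAX_ATTEMPTS) -> bool:
    """Try a message up to max_attempts times; park it in the DLQ if every attempt fails."""
    last_error = ""
    for attempt in range(1, max_attempts + 1):
        try:
            process(message)
            return True  # success: this is where the subscriber would ACK
        except Exception as exc:
            last_error = f"attempt {attempt}: {exc!r}"
    # Keep the failed message and the reason, so it can be inspected or replayed later.
    dead_letters.append({"message": message, "error": last_error})
    return False


def process(message: dict) -> None:
    if "userId" not in message["data"]:
        raise KeyError("userId")  # a malformed "poison" message fails every time


dlq: List[dict] = []
ok = handle_with_dlq({"eventId": "e1", "data": {"userId": 1}}, process, dlq)
bad = handle_with_dlq({"eventId": "e2", "data": {}}, process, dlq)
print(ok, bad, len(dlq))  # True False 1
```

Because the poison message is parked instead of retried forever, it no longer blocks the messages behind it, and the size of `dlq` becomes the signal worth alerting on.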

It started with a failed midnight deployment that froze every notification in our app. I remember staring at cascading errors, realizing our tightly coupled services were tripping over each other (like dominoes with egos).

The bottleneck was obvious: direct integrations meant one failure spread everywhere. The fix starts small:

- Audit one critical event.

- Redesign it with a publisher, topic, and subscriber.

- Measure latency and resilience.

Shifting to a publish-subscribe architecture decouples systems: components communicate asynchronously, without waiting on each other (a sanity saver).

Pro tip: begin small, prove value, then expand. The flexibility you gain is what makes long-term scaling possible.

Build Smarter, Scale Faster with Modern Feed Systems

You came here to better understand how feed-based systems, workflow optimization, and publish-subscribe architecture work together to create scalable, resilient digital infrastructure. Now you have a clearer picture of how these components reduce bottlenecks, improve real-time communication, and support growth without constant re-engineering.

If you’re feeling the strain of slow data delivery, fragile integrations, or workflows that break under scale, you’re not alone. These pain points are exactly why feed-driven systems and publish-subscribe models exist: to eliminate tight coupling, improve performance, and future-proof your stack.

The next step is simple: evaluate your current infrastructure for bottlenecks, identify where event-driven patterns can replace rigid workflows, and start implementing a scalable feed strategy. Don’t wait for performance issues to escalate.

Join thousands of tech leaders who are optimizing their systems with proven, feed-based strategies. Subscribe now for expert breakdowns, actionable infrastructure insights, and step-by-step guidance to build faster, more resilient digital systems.