If you’re searching for a clear, practical microservices architecture breakdown, you’re likely trying to understand how distributed systems actually work in real-world environments—not just in theory. Modern applications demand scalability, resilience, and rapid deployment cycles, yet many teams struggle to connect high-level concepts with implementation strategies.

This article is designed to bridge that gap. We’ll unpack core components, explain how services communicate, examine data management strategies, and explore how feed-based network protocols and workflow optimization principles fit into the bigger picture. Whether you’re refining an existing system or planning a new one, you’ll gain clarity on how microservices function as an integrated ecosystem.

To ensure accuracy and practical value, this guide draws on industry-documented best practices, infrastructure case studies, and proven digital architecture patterns used in production environments. By the end, you’ll have a structured understanding of how microservices are designed, deployed, and optimized for long-term performance.

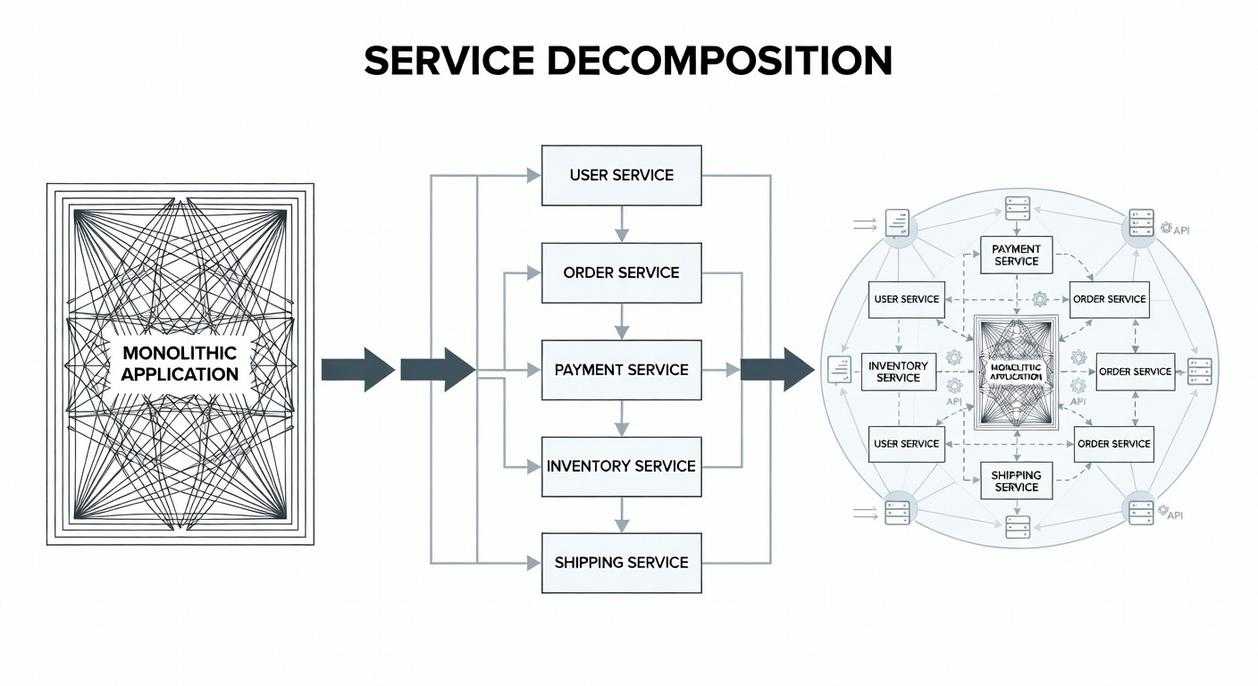

Monolithic architecture means one large codebase where all features live together. It’s simple at first, but like cramming every department into one office, growth gets messy. A microservices model splits that giant system into smaller, independent services that communicate through APIs (application programming interfaces: the defined contracts through which one piece of software calls another).

Think of each service as its own mini‑app. If one fails, the others can keep running, provided they are built to tolerate its absence.

This microservices architecture breakdown clarifies:

- Core components: services, APIs, databases

- Communication patterns: synchronous calls vs. event streams

Critics argue distributed systems add complexity. True. But that trade-off often buys scalability, faster updates, and clearer workflows.

The Foundational Pillars of a Distributed System

Distributed systems succeed or fail on their foundations. First, Single Responsibility & Bounded Context means each service owns one clear business capability. A bounded context defines the logical boundary within which a model applies. For example, Amazon separates payments, inventory, and recommendations into distinct services to reduce coupling (Amazon’s engineering case studies, 2019). Critics argue this creates too many moving parts. However, isolating services behind clear boundaries limits how far a failure can spread and simplifies recovery, a theme that NIST’s guidance on microservices-based systems also addresses (NIST SP 800-204).

Next, Independent Deployability ensures services can be updated without redeploying the entire platform. Netflix demonstrated this with thousands of daily production deployments while maintaining uptime (Netflix Tech Blog). In practice, this requires strong CI/CD pipelines and contract testing, supported by:

- Clear API contracts

- Automated integration tests

- Backward-compatible changes
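To make the idea of a backward-compatible change concrete, here is a minimal sketch of a consumer-side contract check. Everything in it is hypothetical: the `CONSUMER_CONTRACT` fields, the `satisfies_contract` helper, and the sample responses are illustrations, not the API of any particular contract-testing tool.

```python
from typing import Any

# Hypothetical contract: the fields (and types) one consumer relies on.
CONSUMER_CONTRACT: dict[str, type] = {
    "order_id": str,
    "total_cents": int,
    "status": str,
}


def satisfies_contract(response: dict[str, Any], contract: dict[str, type]) -> list[str]:
    """Return a list of violations; an empty list means the response is compatible."""
    violations = []
    for field, expected_type in contract.items():
        if field not in response:
            violations.append(f"missing field: {field}")
        elif not isinstance(response[field], expected_type):
            violations.append(f"wrong type for {field}: expected {expected_type.__name__}")
    return violations


# Adding a field is backward compatible: existing consumers simply ignore it.
v2_response = {"order_id": "o-1", "total_cents": 1999, "status": "paid", "currency": "USD"}

# Renaming a field is a breaking change: consumers lose a field they read.
v3_response = {"id": "o-1", "total_cents": 1999, "status": "paid"}

print(satisfies_contract(v2_response, CONSUMER_CONTRACT))  # []
print(satisfies_contract(v3_response, CONSUMER_CONTRACT))  # ['missing field: order_id']
```

A check along these lines could run in the provider’s pipeline, so a breaking change fails the build before it reaches consumers.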

Then comes Decentralized Governance. Teams choose the best tools for their service. While some fear tech sprawl, ThoughtWorks reports higher team velocity when autonomy is balanced with lightweight standards.

Finally, Decentralized Data Management embraces the database-per-service pattern. Although critics worry about consistency, approaches like eventual consistency and saga orchestration mitigate risk. The common thread across all four pillars: autonomy, when disciplined, drives resilience.

Mapping the Key Components and Their Interactions

When people talk about microservices, they often focus on speed and scalability. Fair. But in my experience, the real value comes from clarity—knowing exactly how each piece fits together. Think of it less like a monolith and more like an ensemble cast where every character has a defined role (yes, even the quiet one).

The Services Themselves

A well-designed microservice is small, autonomous, and built around a single business capability. Autonomous means it can be deployed independently without breaking the rest of the system. In practice, that might look like a payment service updating its fraud rules without forcing a full application redeploy.

Still, critics argue that microservices add unnecessary complexity. They’re not wrong—poorly scoped services create chaos. But when boundaries are clear, complexity becomes manageable rather than overwhelming.

The API Gateway

The API Gateway acts as the single entry point for clients. It handles:

- Request routing to the correct service

- Request composition (aggregating multiple responses)

- Authentication and authorization

- SSL termination

Instead of every service worrying about security, the gateway centralizes it. If you want a deeper dive, see Understanding API Gateways and Their Role in System Design.
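The gateway responsibilities listed above can be sketched in a few lines. This is an in-process illustration only: `user_service`, `order_service`, the `/profile` route, and the token set are all made-up stand-ins, and a real gateway would make network calls and validate credentials properly.

```python
from typing import Callable


# Stand-ins for downstream services; real ones would be reached over the network.
def user_service(user_id: str) -> dict:
    return {"user_id": user_id, "name": "Ada"}


def order_service(user_id: str) -> dict:
    return {"user_id": user_id, "orders": ["o-1", "o-2"]}


VALID_TOKENS = {"secret-token"}  # Hypothetical; real gateways verify signed tokens.

ROUTES: dict[str, Callable[[str], dict]] = {
    "/users": user_service,
    "/orders": order_service,
}


def handle(path: str, user_id: str, token: str) -> tuple[int, dict]:
    """Authenticate once at the edge, then route or compose."""
    if token not in VALID_TOKENS:
        return 401, {"error": "unauthorized"}
    if path == "/profile":
        # Request composition: merge two service responses into one payload.
        return 200, {**user_service(user_id), **order_service(user_id)}
    service = ROUTES.get(path)
    if service is None:
        return 404, {"error": "no route"}
    return 200, service(user_id)


print(handle("/profile", "u-1", "secret-token"))
print(handle("/users", "u-1", "wrong-token"))  # (401, {'error': 'unauthorized'})
```

Note that neither stand-in service checks the token itself; that is the centralization the gateway buys you.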

Some engineers prefer direct service calls to reduce latency. I get that. But I’d argue the operational simplicity of a gateway often outweighs minor performance trade-offs.

Service Discovery Mechanisms

In dynamic cloud environments, services move. Service discovery ensures they can still find each other.

- Client-side discovery: The client queries a service registry directly, then picks an instance to call.

- Server-side discovery: A load balancer queries the registry on the client’s behalf.

A service registry is simply a database of available service instances and their locations.
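As a rough illustration of client-side discovery, the sketch below keeps the registry in memory and has the client pick instances round-robin. The class names, addresses, and the round-robin choice are assumptions made for the example; production registries also handle health checks and expiry.

```python
import itertools
from collections import defaultdict


class ServiceRegistry:
    """In-memory registry mapping service names to instance addresses."""

    def __init__(self) -> None:
        self._instances: dict[str, list[str]] = defaultdict(list)

    def register(self, name: str, address: str) -> None:
        if address not in self._instances[name]:
            self._instances[name].append(address)

    def deregister(self, name: str, address: str) -> None:
        if address in self._instances[name]:
            self._instances[name].remove(address)

    def lookup(self, name: str) -> list[str]:
        return list(self._instances[name])


class DiscoveryClient:
    """Client-side discovery: query the registry, then choose an instance round-robin."""

    def __init__(self, registry: ServiceRegistry) -> None:
        self._registry = registry
        self._counter = itertools.count()

    def resolve(self, name: str) -> str:
        instances = self._registry.lookup(name)
        if not instances:
            raise LookupError(f"no instances registered for {name}")
        return instances[next(self._counter) % len(instances)]


registry = ServiceRegistry()
registry.register("payments", "10.0.0.5:8080")
registry.register("payments", "10.0.0.6:8080")

client = DiscoveryClient(registry)
print(client.resolve("payments"))  # 10.0.0.5:8080
print(client.resolve("payments"))  # 10.0.0.6:8080

# When an instance goes away, callers stop seeing it without any config change.
registry.deregister("payments", "10.0.0.5:8080")
print(client.resolve("payments"))  # 10.0.0.6:8080
```

In server-side discovery, the `resolve` logic would live in the load balancer instead of in each client.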

Containerization and Orchestration (Docker & Kubernetes)

Containers package applications with dependencies. Kubernetes orchestrates them—handling scaling, deployment, and health checks. Without orchestration, managing dozens of services becomes a logistical nightmare.
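Health checks need something to check. Kubernetes can probe an HTTP endpoint inside a container, so here is a minimal sketch of one using only the Python standard library. The `/healthz` path and port 8080 are conventions assumed for the example, not requirements, and `make_server` is a hypothetical helper name.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer


class HealthHandler(BaseHTTPRequestHandler):
    """Answers GET /healthz with 200 and a small JSON body; everything else is 404."""

    def do_GET(self) -> None:
        if self.path == "/healthz":
            self._reply(200, {"status": "ok"})
        else:
            self._reply(404, {"error": "not found"})

    def _reply(self, code: int, payload: dict) -> None:
        body = json.dumps(payload).encode("utf-8")
        self.send_response(code)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args) -> None:
        pass  # Silence per-request logging for this sketch.


def make_server(host: str = "0.0.0.0", port: int = 8080) -> HTTPServer:
    """Build the server; call serve_forever() on the result to start it."""
    return HTTPServer((host, port), HealthHandler)
```

Calling `make_server().serve_forever()` starts the endpoint. A real service would usually report on its dependencies too, returning a non-200 status when, say, its database is unreachable, so the orchestrator can restart it or stop routing traffic to it.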

Observability (Logging, Metrics, Tracing)

Observability means understanding system behavior through:

- Logging: Recorded events

- Metrics: Numerical performance data

- Tracing: Tracking a request across services

Honestly, no microservices architecture breakdown is complete without observability. Distributed systems fail in creative ways (they always do). Robust visibility isn’t optional—it’s survival.
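Here is a small sketch of how the three signals fit together. It assumes two made-up services and uses in-memory lists and dicts as stand-ins for a log pipeline and a metrics backend. The key idea is the `trace_id`: it is created once and passed along, so log lines from different services can be stitched into a single request path.

```python
import time
import uuid
from typing import Optional

LOG: list[dict] = []          # Stand-in for a log pipeline.
METRICS: dict[str, int] = {}  # Stand-in for a metrics backend (counters only).


def log_event(service: str, trace_id: str, message: str) -> None:
    """Logging: record a structured event tagged with the trace ID."""
    LOG.append({"ts": time.time(), "service": service, "trace_id": trace_id, "message": message})


def increment(metric: str) -> None:
    """Metrics: bump a named counter."""
    METRICS[metric] = METRICS.get(metric, 0) + 1


def inventory_service(trace_id: str, sku: str) -> bool:
    log_event("inventory", trace_id, f"reserved {sku}")
    increment("inventory.reservations")
    return True


def order_service(sku: str, trace_id: Optional[str] = None) -> str:
    # Tracing: reuse the caller's trace ID if present, otherwise start a new trace.
    trace_id = trace_id or uuid.uuid4().hex
    log_event("orders", trace_id, f"order received for {sku}")
    increment("orders.received")
    inventory_service(trace_id, sku)
    return trace_id


trace = order_service("sku-42")
for entry in LOG:
    print(entry["service"], entry["trace_id"] == trace, entry["message"])
```

Filtering the log by one `trace_id` reconstructs the path a request took, which is exactly what you want at hand when something fails across service boundaries.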

Analyzing Network Protocols and Data Flow Strategies

Synchronous vs. Asynchronous Communication

Synchronous communication (like RESTful APIs) means one service sends a request and waits for a response. Think of it like a phone call—you stay on the line until you get an answer. This works well for real-time needs such as authentication checks or payment confirmations where immediate feedback matters.

Asynchronous communication uses message brokers like RabbitMQ or Kafka. Instead of waiting, a service publishes an event and moves on. Other services react when ready. It’s more like sending a text—you don’t pause your life waiting for the reply. This model shines in high-traffic systems, order processing pipelines, and notifications.

Choose synchronous for simplicity and immediate consistency. Choose asynchronous for scale and resilience. (If your system crashes because one service sneezed, you picked wrong.)
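The difference is easier to see side by side. In this sketch, `check_credentials` is a hypothetical synchronous call, and `Broker` is a tiny in-memory stand-in for a message broker; it is not how RabbitMQ or Kafka work internally, only an illustration of the publish-and-move-on behavior.

```python
from collections import deque
from typing import Callable


def check_credentials(user: str, password: str) -> bool:
    """Synchronous style: the caller blocks until it has the answer."""
    return (user, password) == ("ada", "s3cret")


class Broker:
    """Minimal in-memory stand-in for a message broker."""

    def __init__(self) -> None:
        self._queue: deque = deque()
        self._subscribers: dict[str, list[Callable[[dict], None]]] = {}

    def subscribe(self, topic: str, handler: Callable[[dict], None]) -> None:
        self._subscribers.setdefault(topic, []).append(handler)

    def publish(self, topic: str, payload: dict) -> None:
        # Publishing only enqueues; the publisher does not wait for consumers.
        self._queue.append((topic, payload))

    def deliver_pending(self) -> int:
        """Deliver queued messages to subscribers; return how many were processed."""
        delivered = 0
        while self._queue:
            topic, payload = self._queue.popleft()
            for handler in self._subscribers.get(topic, []):
                handler(payload)
            delivered += 1
        return delivered


print(check_credentials("ada", "s3cret"))  # True, and the caller waited for it

broker = Broker()
sent_emails: list[str] = []
broker.subscribe("order.placed", lambda event: sent_emails.append(event["order_id"]))

broker.publish("order.placed", {"order_id": "o-1"})
print(sent_emails)  # [] because the publisher has already moved on
broker.deliver_pending()
print(sent_emails)  # ['o-1']
```

The gap between `publish` and `deliver_pending` is the point: if the email consumer is down for a while, the order still goes through and the message waits.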

Event-Driven Architecture Deep Dive

Event-driven architecture decouples services by letting them react to events instead of direct calls. This improves fault isolation and horizontal scalability. In choreography, services react independently to events. In orchestration, a central coordinator directs the workflow. Choreography reduces central bottlenecks but can become hard to trace. Orchestration improves visibility but adds dependency risk.
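To contrast the two styles, here is the same three-step flow written both ways. The event names, the `EventBus` class, and the callbacks are invented for illustration; the bus delivers in-process and immediately, which a real broker would not.

```python
from collections import defaultdict
from typing import Callable


class EventBus:
    """In-process event bus that records every emitted event in order."""

    def __init__(self) -> None:
        self._handlers: dict[str, list[Callable[[dict], None]]] = defaultdict(list)
        self.history: list[str] = []

    def on(self, event: str, handler: Callable[[dict], None]) -> None:
        self._handlers[event].append(handler)

    def emit(self, event: str, data: dict) -> None:
        self.history.append(event)
        for handler in self._handlers[event]:
            handler(data)


def wire_choreography(bus: EventBus) -> None:
    """Choreography: each service reacts to one event and emits the next."""
    bus.on("order.placed", lambda d: bus.emit("payment.completed", d))      # payment service
    bus.on("payment.completed", lambda d: bus.emit("shipment.created", d))  # shipping service


def orchestrate(order: dict, charge: Callable[[dict], None], ship: Callable[[dict], None]) -> list[str]:
    """Orchestration: one coordinator calls each step explicitly, in order."""
    steps = []
    charge(order)
    steps.append("payment.completed")
    ship(order)
    steps.append("shipment.created")
    return steps


bus = EventBus()
wire_choreography(bus)
bus.emit("order.placed", {"order_id": "o-1"})
print(bus.history)  # ['order.placed', 'payment.completed', 'shipment.created']

print(orchestrate({"order_id": "o-1"}, charge=lambda o: None, ship=lambda o: None))
# ['payment.completed', 'shipment.created']
```

In the choreographed version, no single function describes the whole flow; you only see it by reading `history`. In the orchestrated version the flow is spelled out in one place, but every step now depends on that coordinator being available.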

Solving for Data Consistency

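Distributed transactions are tricky. The Saga pattern breaks them into a sequence of smaller local transactions, each paired with a compensating action that undoes its effect. If one step fails, the saga runs the compensating actions for the steps that already completed, typically in reverse order, rather than relying on a database-level rollback. It’s practical for maintaining integrity across services without locking everything down.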
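A minimal sketch of an orchestrated saga follows, assuming each step is a plain function paired with a compensating function. The step names, the `run_saga` helper, and the in-memory `inventory` are hypothetical; a production saga would also persist its progress so it can resume after a crash.

```python
from typing import Callable

# A step is (name, action, compensation). All names here are illustrative.
Step = tuple[str, Callable[[], None], Callable[[], None]]


def run_saga(steps: list[Step]) -> list[str]:
    """Run steps in order; on failure, compensate completed steps in reverse."""
    log: list[str] = []
    completed: list[Step] = []
    for step in steps:
        name, action, _ = step
        try:
            action()
        except Exception:
            log.append(f"{name}: failed")
            for done_name, _, compensate in reversed(completed):
                compensate()
                log.append(f"{done_name}: compensated")
            return log
        completed.append(step)
        log.append(f"{name}: done")
    return log


inventory = {"sku-1": 5}


def reserve_stock() -> None:
    inventory["sku-1"] -= 1


def release_stock() -> None:
    inventory["sku-1"] += 1


def charge_card() -> None:
    raise RuntimeError("card declined")  # Simulated failure.


def refund_card() -> None:
    pass  # Never runs here, because the charge never succeeded.


result = run_saga([
    ("reserve_stock", reserve_stock, release_stock),
    ("charge_card", charge_card, refund_card),
])
print(result)     # ['reserve_stock: done', 'charge_card: failed', 'reserve_stock: compensated']
print(inventory)  # {'sku-1': 5}
```

The stock count ends where it started, not because a database rolled anything back, but because the saga explicitly released the reservation.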

Optimizing Workflows with the Right Protocol

Protocol choice affects latency, fault tolerance, and complexity. REST is straightforward, but synchronous calls couple the caller to the callee’s availability: if the downstream service is slow or down, the caller feels it immediately. Event streams improve durability and scaling but require observability tools to trace what happened. Mapping service dependencies first helps teams choose between them. Pro tip: monitor queue depth and response times early, because small delays compound fast.
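As a rough sketch of that kind of early monitoring, the check below flags a deep queue or a slow 95th-percentile response time. The thresholds (1000 messages, 250 ms) and the function names are arbitrary assumptions for the example; sensible limits depend entirely on your workload.

```python
def percentile(samples: list[float], pct: int) -> float:
    """Nearest-rank percentile for an integer pct between 1 and 100."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    rank = (pct * len(ordered) + 99) // 100  # Ceiling division gives a 1-based rank.
    return ordered[rank - 1]


def check_backpressure(
    queue_depth: int,
    latencies_ms: list[float],
    max_depth: int = 1000,       # Hypothetical threshold.
    max_p95_ms: float = 250.0,   # Hypothetical threshold.
) -> list[str]:
    """Return human-readable alerts; an empty list means both signals look fine."""
    alerts = []
    if queue_depth > max_depth:
        alerts.append(f"queue depth {queue_depth} exceeds {max_depth}")
    p95 = percentile(latencies_ms, 95)
    if p95 > max_p95_ms:
        alerts.append(f"p95 latency {p95} ms exceeds {max_p95_ms} ms")
    return alerts


healthy = [100.0] * 20
degraded = [100.0] * 18 + [900.0] * 2

print(check_backpressure(50, healthy))     # []
print(check_backpressure(1500, degraded))
```

Using a percentile rather than an average matters here: in the degraded sample most requests are still fast, and an average would hide the slow tail that users actually notice.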

Building a Resilient and Scalable Digital Infrastructure

At first, I underestimated the core trade-off. Microservices promise scalability and agility—the ability to deploy features independently and scale only what’s needed. However, that flexibility comes with operational complexity. More services mean more monitoring, more networking, and more failure points (and yes, they will fail at 2 a.m.).

That’s why a structural integrity checklist matters. An API Gateway centralizes requests and reduces client-side chaos. Service Discovery ensures services can dynamically find each other without hardcoded endpoints. Decentralized Data prevents tight coupling at the database level. Miss one of these, and the system wobbles.

Here’s where many teams stumble: they treat architecture as purely technical. Conway’s Law states that systems mirror organizational communication structures (Melvin Conway, 1967). If teams aren’t aligned, your microservices architecture will reflect that misalignment.

So before defining service boundaries, analyze business capabilities and team workflows. Structure follows strategy—not the other way around.

Build Smarter, Scalable Systems Starting Today

You came here to better understand how modern systems scale, communicate, and stay resilient—and now you have a clearer picture of how feed-based network protocols, workflow optimization strategies, and a microservices architecture breakdown fit together to support high-performing digital infrastructure.

If you’ve been struggling with bottlenecks, rigid monoliths, or inefficient data flow, you’re not alone. Fragmented systems and unclear architecture decisions slow innovation and increase operational risk. The good news? With the right structural approach, you can eliminate those constraints and build systems designed for adaptability and growth.

Now it’s time to act. Audit your current architecture, identify workflow friction points, and start implementing modular, feed-driven components that improve scalability and resilience. Teams that adopt structured microservices strategies often report faster deployment cycles and more reliable system performance, though results depend on how well the boundaries fit the business.

Don’t let outdated infrastructure hold you back. Take the next step toward a streamlined, future-ready architecture—optimize your workflows, refine your service boundaries, and build a system that scales with confidence.