I read about 50 tech headlines before breakfast most days.

You know what? Maybe three of them actually matter.

The rest is noise. Product launches that won’t change anything. Funding rounds for companies that’ll be gone in two years. Features that sound cool but solve nothing.

You’re here because you want to cut through that mess. You need to know what’s actually shifting in tech without spending hours sorting through everything.

I focus on the foundational changes. The ones that ripple through digital infrastructure and change how systems actually work. Not the flashy stuff that gets clicks.

This article gives you a framework for filtering what matters. I’ll show you which developments have real weight and which ones you can ignore.

My background is in digital infrastructure and network architecture. That means I look at the why behind the headlines, not just the what. I care about the technical substance that most coverage glosses over.

You’ll walk away knowing how to spot the difference between a real shift and just another trend cycle.

No hype. Just the tech news that has legs.

The Infrastructure Layer: AI’s Unquenchable Thirst for Power and Silicon

Everyone’s talking about the next big AI model.

But I’m watching something else entirely.

The real story isn’t happening in research labs. It’s happening in massive warehouses across the globe where companies are building the physical backbone that makes AI possible.

And here’s what most people miss. We’re hitting a wall.

Some experts say we should slow down and focus on making existing models more efficient. They argue that this infrastructure arms race is wasteful and unsustainable. That we’re building too much too fast.

I disagree.

The companies pouring billions into data centers and chips aren’t being reckless. They’re reading the same signals I am. Demand for compute power isn’t slowing down. It’s accelerating.

Here’s what you need to watch.

Data centers are going through their biggest transformation in decades. Traditional air cooling can’t handle the heat output from modern AI chips anymore. So the industry is shifting to liquid cooling systems (the kind you’d see in high-performance gaming rigs, but scaled up massively).

This isn’t a small change. It requires completely redesigning facilities from the ground up.

At the same time, there’s a scramble for power. AI workloads consume electricity at rates that would make your local utility nervous. According to Feedworldtech reports, some new data centers require as much power as a small city.

That’s forcing a geographic shift. Companies are moving computing resources closer to energy sources rather than closer to users.

Then there’s the silicon itself.

NVIDIA gets most of the attention, but the real action is in custom chips. Google has its TPUs. Amazon built Trainium and Inferentia. Even Microsoft is designing its own hardware now.

Why does this matter to you?

Because the semiconductor supply chain has become a geopolitical chess game. Taiwan produces most of the world’s advanced chips. China wants that capability. The US is spending billions to build domestic production.

If you’re planning any AI strategy, you need to account for these realities. Compute costs aren’t coming down anytime soon. Access to cutting-edge hardware might get harder, not easier.

My recommendation is simple.

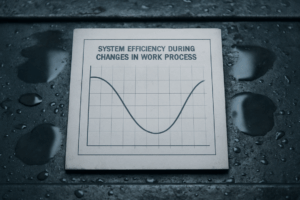

Stop assuming infinite compute at decreasing prices. That era is over. Start thinking about efficiency from day one. Choose models that match your actual needs instead of defaulting to the biggest option available.

And if you’re building something serious? Lock in your infrastructure partnerships now before capacity gets even tighter.

The Protocol Revolution: How Data Moves in the Modern Era

You’ve probably noticed that some apps just feel faster than others.

It’s not your imagination.

The way data moves across the internet is changing. And most people have no idea it’s happening.

Here’s what I mean. The old protocols we’ve used for decades (TCP is the big one) were built for a different internet. They work fine for loading web pages. But when you’re streaming a live video call or running AI models that need instant responses, they start to break down.

The problem isn’t obvious at first. You just notice a slight lag. A buffering wheel that spins a second too long. A chat message that takes forever to send.

Now, some people will tell you that TCP is fine. That we should stick with what works. After all, it’s been the backbone of the internet since the 1970s and hasn’t failed us yet.

Fair point.

But think about what we’re asking it to do now. Real-time gaming. 4K video streams. IoT devices sending constant updates. AI applications that need to process data instantly.

TCP wasn’t designed for any of that.

That’s where QUIC comes in. It’s a newer protocol that Google developed and the IETF later standardized, and it’s changing how we think about internet connections. QUIC (the name began as an acronym for Quick UDP Internet Connections) does something clever. It runs over UDP and folds the transport and encryption handshakes into one, which cuts the round trips that slow down a TCP connection.
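To put rough numbers on the handshake savings, here is a minimal sketch that counts the round trips needed before a client can send its first request. The round-trip counts follow the standard handshakes (one for TCP, one more for TLS 1.3, two more for TLS 1.2, one combined for QUIC). The 80 ms round-trip time is an assumed figure, and the names `SETUP_ROUND_TRIPS` and `setup_delay_ms` are hypothetical.

```python
# Round trips needed before the client can send its first request.
# Counts follow the standard handshakes; real connections vary
# (TCP Fast Open, session resumption, packet loss, and so on).
SETUP_ROUND_TRIPS = {
    "tcp+tls1.2": 3,  # 1 for TCP, 2 for TLS 1.2
    "tcp+tls1.3": 2,  # 1 for TCP, 1 for TLS 1.3
    "quic": 1,        # transport and TLS 1.3 handshake combined
    "quic-0rtt": 0,   # resumed connection, request sent in the first flight
}


def setup_delay_ms(stack: str, rtt_ms: float) -> float:
    """Return the time spent on connection setup for a given round-trip time."""
    return SETUP_ROUND_TRIPS[stack] * rtt_ms


if __name__ == "__main__":
    rtt = 80.0  # assumed mobile-network round-trip time, in milliseconds
    for stack in SETUP_ROUND_TRIPS:
        print(f"{stack:11s} {setup_delay_ms(stack, rtt):6.1f} ms before first request")
```

The gap grows with distance: the higher the round-trip time, the more each saved round trip is worth, which is why the difference tends to show up most on mobile and long-haul connections.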

What does that actually mean for you?

Faster connection times. Better performance on spotty networks. And built-in encryption that doesn’t add extra delays.

HTTP/3 builds on top of QUIC. If you’ve ever wondered why some websites load almost instantly while others crawl, this is part of the answer. QUIC delivers each stream independently, so a lost packet stalls only the stream it belongs to. That’s why sites using HTTP/3 can recover from packet loss without grinding to a halt.
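A toy model shows why packet loss hurts less over QUIC. This is a simplified sketch, not a protocol implementation: it assumes three streams sharing one connection, invented arrival times, and a single lost packet whose retransmission arrives late. The function name `completion_times` is hypothetical.

```python
from typing import Dict, List, Tuple

# Each packet is (stream_id, arrival_time_ms). Packets are listed in send order.
Packet = Tuple[str, int]


def completion_times(packets: List[Packet], per_stream_ordering: bool) -> Dict[str, int]:
    """Return when each stream's data is fully delivered to the application.

    per_stream_ordering=False models a single ordered byte stream (TCP-like):
    a packet is delivered only after every earlier packet has arrived.
    per_stream_ordering=True models independent streams (QUIC-like):
    a packet waits only for earlier packets on its own stream.
    """
    done: Dict[str, int] = {}
    latest_any = 0  # delivery time of the most recent packet on any stream
    latest_by_stream: Dict[str, int] = {}
    for stream, arrival in packets:
        if per_stream_ordering:
            deliver = max(arrival, latest_by_stream.get(stream, 0))
            latest_by_stream[stream] = deliver
        else:
            deliver = max(arrival, latest_any)
            latest_any = deliver
        done[stream] = max(done.get(stream, 0), deliver)
    return done


if __name__ == "__main__":
    # Stream "a" loses its first packet; the retransmission arrives at 200 ms.
    packets = [("a", 200), ("b", 20), ("c", 30), ("b", 40), ("c", 50), ("a", 60)]
    print("single ordered stream:", completion_times(packets, per_stream_ordering=False))
    print("independent streams:  ", completion_times(packets, per_stream_ordering=True))
```

In the single-stream case every stream finishes at 200 ms because everything queues behind the lost packet. With independent streams, only stream "a" waits.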

The shift is already happening. According to W3Techs, over 30% of websites now support HTTP/3. That number keeps climbing.

Feed-based platforms benefit the most. Think about scrolling through your social feed. You want new content to appear instantly. No waiting. No stuttering. The protocols Feedworldtech covers in its tech news are what make that seamless experience possible.

So what should you do if you’re building something?

Start testing QUIC if you’re handling real-time data or serving mobile users. Most modern browsers already support it. The implementation isn’t as hard as you’d think.
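A low-effort first test is checking whether a server already advertises HTTP/3. Servers typically do that through the `Alt-Svc` response header. The sketch below parses a header value you have already fetched (for example with `curl -I`). It is a simplified parser under stated assumptions, and the function names are hypothetical.

```python
from typing import List


def advertised_protocols(alt_svc: str) -> List[str]:
    """Extract protocol IDs from an Alt-Svc header value (RFC 7838).

    Simplified parser: assumes no commas or semicolons inside quoted
    authority values, which holds for typical values such as h3=":443".
    """
    value = alt_svc.strip()
    if not value or value.lower() == "clear":
        return []
    protocols = []
    for entry in value.split(","):
        alternative = entry.split(";", 1)[0]  # drop parameters such as ma=86400
        protocol_id = alternative.split("=", 1)[0].strip()
        if protocol_id:
            protocols.append(protocol_id)
    return protocols


def advertises_http3(alt_svc: str) -> bool:
    """True if the header offers HTTP/3 ("h3") or a draft version such as "h3-29"."""
    return any(p == "h3" or p.startswith("h3-") for p in advertised_protocols(alt_svc))


if __name__ == "__main__":
    header = 'h3=":443"; ma=86400, h2=":443"'
    print(advertised_protocols(header))
    print(advertises_http3(header))
```

An advertised `h3` entry only tells you the server offers HTTP/3. Whether a given client actually uses it still depends on the client and on the network path allowing UDP.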

For IoT projects, the difference is even bigger. Devices with unreliable connections can maintain stable data streams without constant reconnections.

The internet is getting faster. But only if you’re using the right protocols to move your data.

Workflow’s New Frontier: The Rise of Autonomous AI Agents

I watched something weird happen last month.

One of my clients called me at 2 AM (not ideal) because their entire deployment pipeline had broken. By the time I logged in to help, the problem was already fixed. Not by their team. By an AI agent they’d set up three weeks earlier.

It had identified the bug, tested three different solutions, and pushed the fix to production. All while everyone was asleep.

That’s not automation. That’s something different.

From Automation to Autonomy

Most people think Zapier is AI. It’s not. It’s just if-this-then-that logic dressed up nice.

Real AI agents? They make decisions. They adapt when things go wrong. They handle workflows that have dozens of steps and multiple possible outcomes.

The difference matters. A Zapier workflow breaks the moment something unexpected happens. An AI agent figures it out.

Real-World Applications

I’ve seen agents in software development that scan codebases for vulnerabilities, then write and test patches before a human even knows there’s a problem. Some companies are running these 24/7 (because bugs don’t sleep).

In logistics, agents are managing supply chains in real time. When a shipment gets delayed, they reroute inventory and update delivery schedules across multiple systems. No human intervention needed. For the full picture, I lay it all out in Wearables Feedworldtech.

Corporate finance teams are using agents to run analyses that used to take analysts days. The agent pulls data from different sources, runs models, and generates reports. Then it waits for someone to review before taking action.

You can find more examples in Feedworldtech’s news coverage of how companies are actually deploying these systems.

Optimizing for an Agentic Workforce

Here’s what nobody tells you. Your data needs to be clean for this to work.

Agents can’t make good decisions if they’re working with messy information. I’ve watched companies spend months preparing their systems before they could even test an agent.

The platforms enabling this shift are getting better. Tools like LangChain and AutoGPT give you frameworks to build agents that can handle complex tasks. But you still need solid APIs, clear documentation, and data that makes sense.

The Human-in-the-Loop

Some people worry agents will replace them. I think that’s backwards.

The best implementations I’ve seen keep humans in charge. The agent does the grunt work and flags decisions that need judgment. Then a person reviews and approves.

It’s faster than doing everything manually. And it’s safer than letting AI run wild.

Think of it like having an assistant who never sleeps but still needs your approval for big moves.
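The review-and-approve pattern can be sketched as a small approval gate. This is a minimal illustration, not a reference to any specific agent framework: `ProposedAction`, `ApprovalGate`, and the two risk labels are hypothetical names, and how an agent assigns risk is left out entirely.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class ProposedAction:
    description: str
    risk: str  # "low" or "high"; how risk is assessed is up to the agent


@dataclass
class ApprovalGate:
    """Runs low-risk actions immediately and queues everything else for review."""

    execute: Callable[[ProposedAction], None]
    pending: List[ProposedAction] = field(default_factory=list)

    def submit(self, action: ProposedAction) -> str:
        # Anything not explicitly marked "low" is treated as needing approval.
        if action.risk == "low":
            self.execute(action)
            return "executed"
        self.pending.append(action)
        return "awaiting approval"

    def approve(self, index: int) -> None:
        """Called by a human reviewer to release a queued action."""
        self.execute(self.pending.pop(index))


if __name__ == "__main__":
    log: List[str] = []
    gate = ApprovalGate(execute=lambda action: log.append(action.description))
    print(gate.submit(ProposedAction("rerun failed test suite", "low")))
    print(gate.submit(ProposedAction("push fix to production", "high")))
    gate.approve(0)  # a person signs off on the queued action
    print(log)
```

Defaulting unknown risk levels to the review queue is a deliberate choice here: a mislabeled action costs a reviewer a few minutes instead of running unchecked.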

Edge Computing and the Decentralized Web: Bringing Compute Closer to Home

Here’s the problem with cloud computing.

Every time you ask Alexa a question or your smart doorbell detects motion, that data travels hundreds of miles to a server farm. Then it comes all the way back to you.

For most things, that works fine. But when you need split-second responses? It falls apart.

Think about autonomous vehicles. A self-driving car can’t wait 200 milliseconds for a cloud server to process whether that’s a pedestrian or a shadow. By then, it’s too late.
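A quick calculation shows what that delay means on the road. The sketch below converts a processing delay into distance traveled. The speeds and the 10 ms on-device figure are assumptions chosen for illustration, and `distance_during_delay_m` is a hypothetical helper.

```python
def distance_during_delay_m(speed_kmh: float, delay_ms: float) -> float:
    """Distance in meters a vehicle covers while waiting delay_ms milliseconds."""
    speed_m_per_s = speed_kmh * 1000 / 3600
    return speed_m_per_s * delay_ms / 1000


if __name__ == "__main__":
    for speed in (50, 100):        # assumed city and highway speeds, km/h
        for delay in (200, 10):    # cloud round trip vs. assumed on-device delay, ms
            meters = distance_during_delay_m(speed, delay)
            print(f"{speed:3d} km/h, {delay:3d} ms delay: {meters:.2f} m traveled")
```

At 100 km/h, a 200 ms wait works out to roughly five and a half meters of travel before the car can even begin to react.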

So what’s the edge?

It’s simple. Instead of sending data to distant servers, you process it right where it’s created. On the device itself or on a nearby local server.

Your phone analyzing your face to unlock. A factory robot adjusting its grip in real time. A retail camera recognizing inventory gaps without uploading footage to the cloud.

According to recent Feedworldtech reports, companies like NVIDIA and Intel are shipping new edge processors that can handle AI workloads locally. We’re talking chips small enough for a security camera but powerful enough to run facial recognition on the spot.

The shift is already happening in manufacturing. Factories are installing edge servers on the floor that monitor equipment and predict failures before they happen. No cloud lag. No bandwidth costs.

Retailers are using edge computing to track customer movement and adjust displays in real time (without the creepy factor of sending your face to some data center).

Telecom companies are building edge nodes at cell towers so your video calls don’t have to bounce across the country.

The result? Faster responses and better privacy.

Your data stays closer to home.

A Clear Signal in a World of Noise

You now have a strategic lens for cutting through the chaos.

Instead of drowning in an endless feed of headlines, you can spot the trends that actually matter. The ones with staying power.

I built this framework because I was tired of watching people chase shiny objects. Not every new app launch is worth your attention. Not every funding announcement signals a shift.

But infrastructure changes? Protocol updates? New workflows that change how we build? Those are the signals you need.

This approach works because it focuses on the foundational layers. When you understand the core technological shifts, you can see what’s coming next. You’re not reacting anymore. You’re anticipating.

Here’s what to do: Apply this filter to your daily news consumption. Ask yourself if what you’re reading touches infrastructure, protocols, or workflows. If it doesn’t, move on.
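If you want to automate a first pass of that filter, a keyword check is one rough way to start. The sketch below is only an illustration: the keyword lists are assumptions you would tune to your own feed, the sample headlines are invented, and `classify` is a hypothetical helper.

```python
import re
from typing import Dict, List, Set

# Assumed keyword lists for the three layers; adjust to your own reading.
SIGNAL_KEYWORDS: Dict[str, Set[str]] = {
    "infrastructure": {"data center", "chip", "semiconductor", "cooling", "power grid", "edge"},
    "protocols": {"quic", "http/3", "tcp", "protocol"},
    "workflows": {"agent", "pipeline", "deployment", "workflow"},
}


def classify(headline: str) -> List[str]:
    """Return the layers a headline touches; an empty list means 'move on'.

    Keywords must start at a word boundary, so "edge" does not match
    "knowledge", but suffixes are allowed, so "chip" matches "chips".
    """
    text = headline.lower()
    return [
        layer
        for layer, keywords in SIGNAL_KEYWORDS.items()
        if any(re.search(r"\b" + re.escape(keyword), text) for keyword in keywords)
    ]


if __name__ == "__main__":
    samples = [
        "Hyperscaler switches new data centers to liquid cooling",
        "CDN enables HTTP/3 by default",
        "Startup raises $40M Series B for photo-sharing app",
    ]
    for headline in samples:
        print(classify(headline) or "skip", "<-", headline)
```

Keyword matching will miss plenty and flag some noise, so treat it as a triage aid. The judgment call about whether a story really touches a foundational layer is still yours.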

Your career decisions get sharper. Your business strategy gets clearer.

The noise will always be there. But now you know what to listen for.

Start filtering your tech news intake today and watch how quickly the important patterns emerge.

Tavien Zolmuth is the kind of writer who genuinely cannot publish something without checking it twice. Maybe three times. They came to digital infrastructure strategies through years of hands-on work rather than theory, which means the things they write about (Digital Infrastructure Strategies, Tech Workflow Optimization Tips, Expert Breakdowns, among other areas) are things they have actually tested, questioned, and revised opinions on more than once.

That shows in the work. Tavien's pieces tend to go a level deeper than most. Not in a way that becomes unreadable, but in a way that makes you realize you'd been missing something important. They have a habit of finding the detail that everybody else glosses over and making it the center of the story, which sounds simple but takes a rare combination of curiosity and patience to pull off consistently. The writing never feels rushed. It feels like the work of someone who sat with the subject long enough to actually understand it.

Outside of specific topics, what Tavien cares about most is whether the reader walks away with something useful. Not impressed. Not entertained. Useful. That's a harder bar to clear than it sounds, and they clear it more often than not, which is why readers tend to remember Tavien's articles long after they've forgotten the headline.
